I got a question from a customer asking whether Microsoft’s “Connected Experiences” are used in some way to “train AI models”. Ultimately, anyone who wants an official, authoritative answer on issues like this that they can’t find in our online documentation needs to open an advisory ticket with Unified Services. (For customers with Unified Enterprise contracts, this is done via 800 936 3100 or https://serviceshub.microsoft.com.) Configuration impact & design definition is the domain of support engineering. (Note: If you open a ticket, be specific about whether you’re talking about Connected Experiences with Microsoft 365 Apps, Windows, or Edge.)

My Thoughts on Connected Experiences and AI

That said, it’s my opinion that this is just an overarching question of ‘privacy’ & ‘compliance’ & Microsoft’s policies around how customer data is handled. “Machine learning” and model training are a form of data retention/usage and, like any other cloud service, are governed by the same privacy rules & restrictions per Microsoft’s commercial agreements. “Machine learning” and AI are not special & get no exception from the requirement to adhere to “Microsoft’s Product Terms”, unless the documentation explicitly states otherwise.

And in some cases, it does “explicitly state otherwise” for a few select “connected experiences”. These are listed under Connected experiences that analyze your content and are marked with a superscript [1]. This includes “3D Maps[1]”, “Map Chart[1]”, “Print[1]”, “Research[1]”, “Send to Kindle[1]”, etc. For example, just like all those Google searches people do, when you use “3D Maps”, the app function connects to Bing for maps, which does leverage user search requests to improve its map search model. Now, it does actually normalize the search into fundamental elements and keywords to provide a more generalized, non-specific search request, but yes, there is training of the Bing search model based on the request. This is discussed in experiences that rely on Bing.

“Optional Connected Experiences”

Additionally, our documentation on Optional Connected Experiences explicitly states that the privacy of their use is not governed by the Microsoft Product Terms that commercial users are used to, but instead by the Microsoft Services Agreement, which is the set of terms traditionally used for consumers. Those concerned about this difference may want to review each of these experiences to see whether these data-handling terms impact their organization.

Honestly, for most of the features placed under the Microsoft Services Agreement, the reason is quite self-explanatory. For example, “Insert Online Video” requires going beyond Microsoft’s terms and adhering to the “privacy” & “terms of service” policies of third-party video services, such as those of Google & YouTube.

Per the Optional Connected Experiences for Microsoft 365 Apps documentation:

“These are optional connected experiences that aren’t covered by your organization’s commercial agreement with Microsoft but are governed by separate terms and conditions. Optional connected experiences offered by Microsoft directly to your users are governed by the Microsoft Services Agreement instead of the Microsoft Product Terms.”

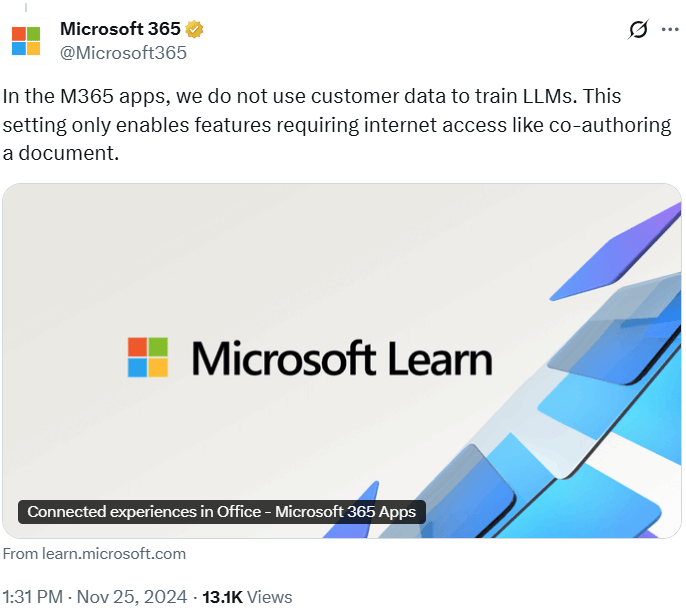

Again, if you would like an official statement, you should open a ticket as I described in the intro to this post. Additionally, there’s this post from the Microsoft account on Twitter:

“In the M365 apps, we do not use customer data to train LLMs. This setting only enables features requiring internet access like co-authoring a document.”

https://learn.microsoft.com/en-us/microsoft-365-apps/privacy/connected-experiences
